Physics-directed data augmentation for deep model transfer to specific sensor

PhyAug Overview

Abstract

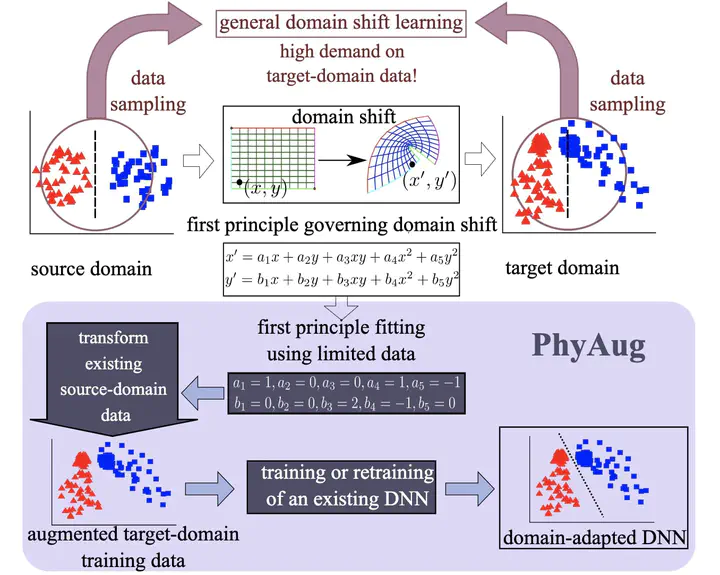

Variation in sensor characteristics causes run-time domain shifts away from the training data, which degrade the performance of deep learning-based sensing systems. Existing transfer learning techniques address this problem but require substantial target-domain data and incur high post-deployment overhead. In contrast, we propose to exploit the first principle governing the domain shift to reduce the demand for target-domain data. Specifically, our approach, called PhyAug, fits the first principle with a small number of labeled or unlabeled data pairs collected by the source sensor and the target sensor, then uses the fitted principle to transform the existing source-domain training data into augmented target-domain data for calibrating the deep neural networks. In two audio sensing case studies, keyword spotting and automatic speech recognition, PhyAug recovers 37% to 72% of the recognition accuracy loss caused by variations in microphone characteristics, using 5 seconds of unlabeled data collected from the target microphones. In a case study of acoustics-based room recognition, PhyAug recovers 33% to 80% of the recognition accuracy loss caused by smartphone microphone variation. In the last case study, fisheye image recognition, PhyAug reduces the image recognition error due to camera-induced distortions by 72%.
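To make the fit-then-transform idea concrete for the microphone case, here is a minimal sketch in Python with NumPy. It does not reproduce the authors' implementation. It assumes, for illustration only, that the domain shift can be modeled as a fixed per-frequency magnitude gain between the source and target microphones, and that phase can be ignored. The function names `estimate_gain` and `apply_gain`, the 16 kHz sample rate, the 256-sample frame length, and the synthetic two-tap low-pass filter standing in for the target microphone are all hypothetical.

```python
import numpy as np


def estimate_gain(source, target, frame_len=256, eps=1e-8):
    """Fit a per-frequency magnitude gain from paired, unlabeled recordings.

    Assumption (not from the paper): the target sensor acts like a fixed
    linear filter on what the source sensor records, so the ratio of
    average magnitude spectra approximates the filter's gain.
    """
    n_frames = min(len(source), len(target)) // frame_len
    window = np.hanning(frame_len)

    def mean_magnitude(x):
        # Split into non-overlapping frames and average the magnitude spectra.
        frames = x[: n_frames * frame_len].reshape(n_frames, frame_len)
        return np.abs(np.fft.rfft(frames * window, axis=1)).mean(axis=0)

    return mean_magnitude(target) / (mean_magnitude(source) + eps)


def apply_gain(clip, gain):
    """Transform one source-domain clip into an augmented target-domain clip."""
    frame_len = 2 * (len(gain) - 1)  # frame length the gain was fitted with
    spectrum = np.fft.rfft(clip)
    # Interpolate the fitted gain onto this clip's frequency bins
    # (both axes are in cycles per sample, from 0 to 0.5).
    clip_gain = np.interp(
        np.fft.rfftfreq(len(clip)), np.fft.rfftfreq(frame_len), gain
    )
    return np.fft.irfft(spectrum * clip_gain, n=len(clip))


# Synthetic stand-in for 5 seconds of paired data at an assumed 16 kHz rate.
rng = np.random.default_rng(0)
paired_source = rng.standard_normal(5 * 16000)
# Hypothetical "target microphone": a simple two-tap low-pass filter.
paired_target = np.convolve(paired_source, [0.5, 0.5], mode="same")

gain = estimate_gain(paired_source, paired_target)

# Augment an existing source-domain training clip with the fitted gain.
training_clip = rng.standard_normal(16000)
augmented = apply_gain(training_clip, gain)
```

In this sketch, the augmented clips would be added to the training set used to calibrate the network for the target microphone. Only the short paired recording touches the target sensor, and no labels are needed to fit the gain.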